AI FACTORIES FOR THE AGE OF INFERENCE

Deploy private AI infrastructure in 120 days.

Hyperscalers, Neoclouds, AI Labs, and Governments need a partner with the capabilities to deploy inference at global scale.

Global Deployment Fabric

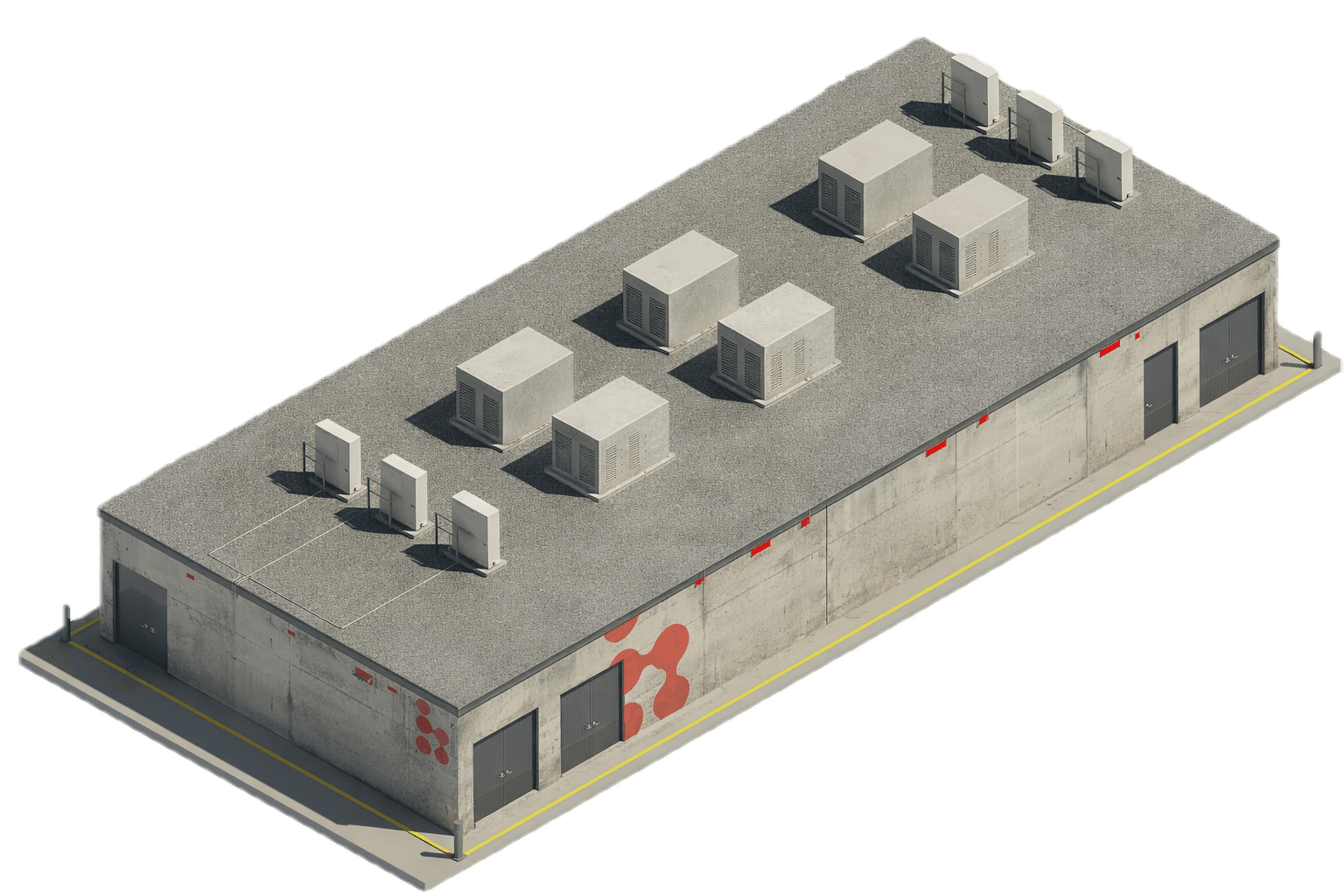

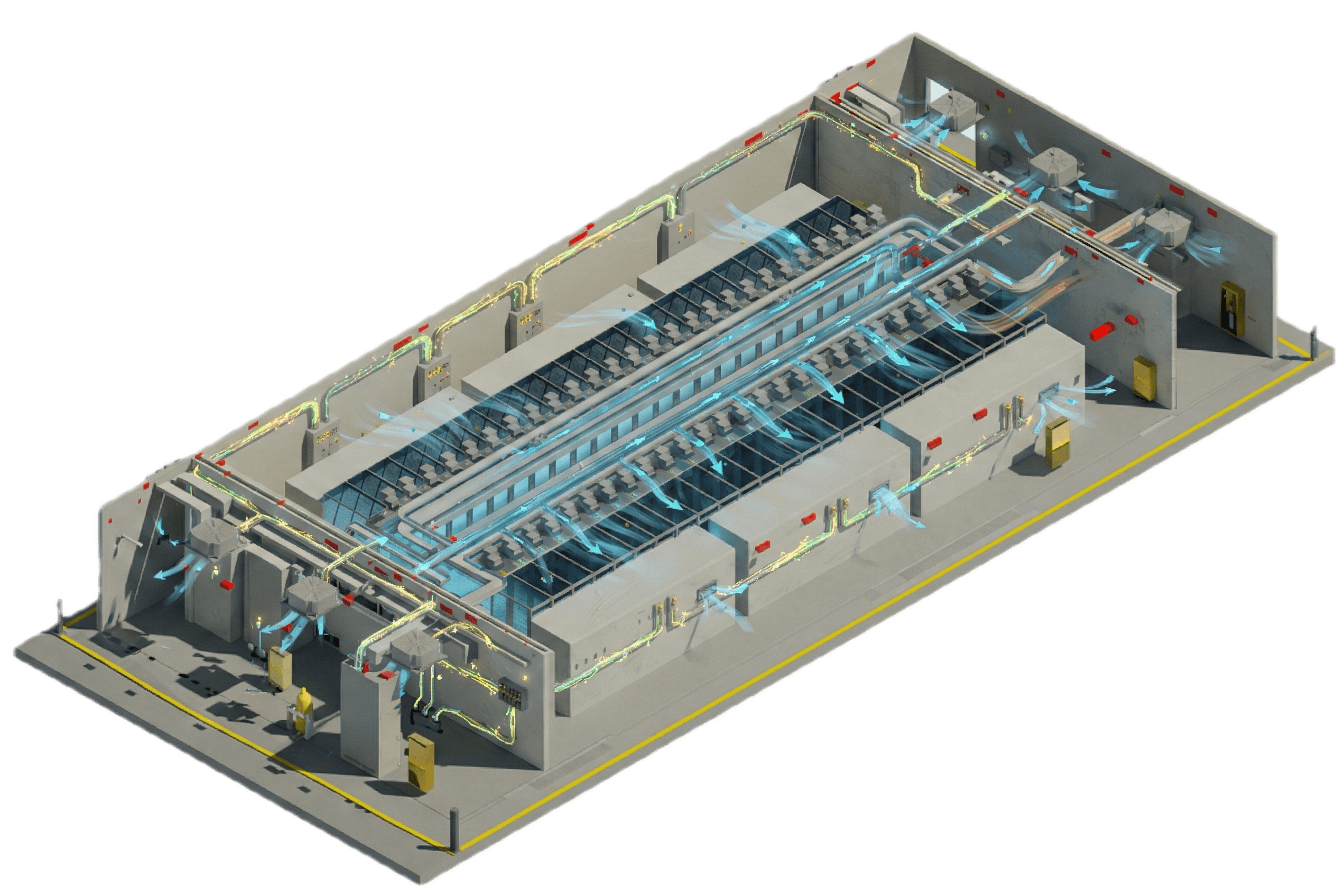

Presenting the Bleeding Edge AI Factory

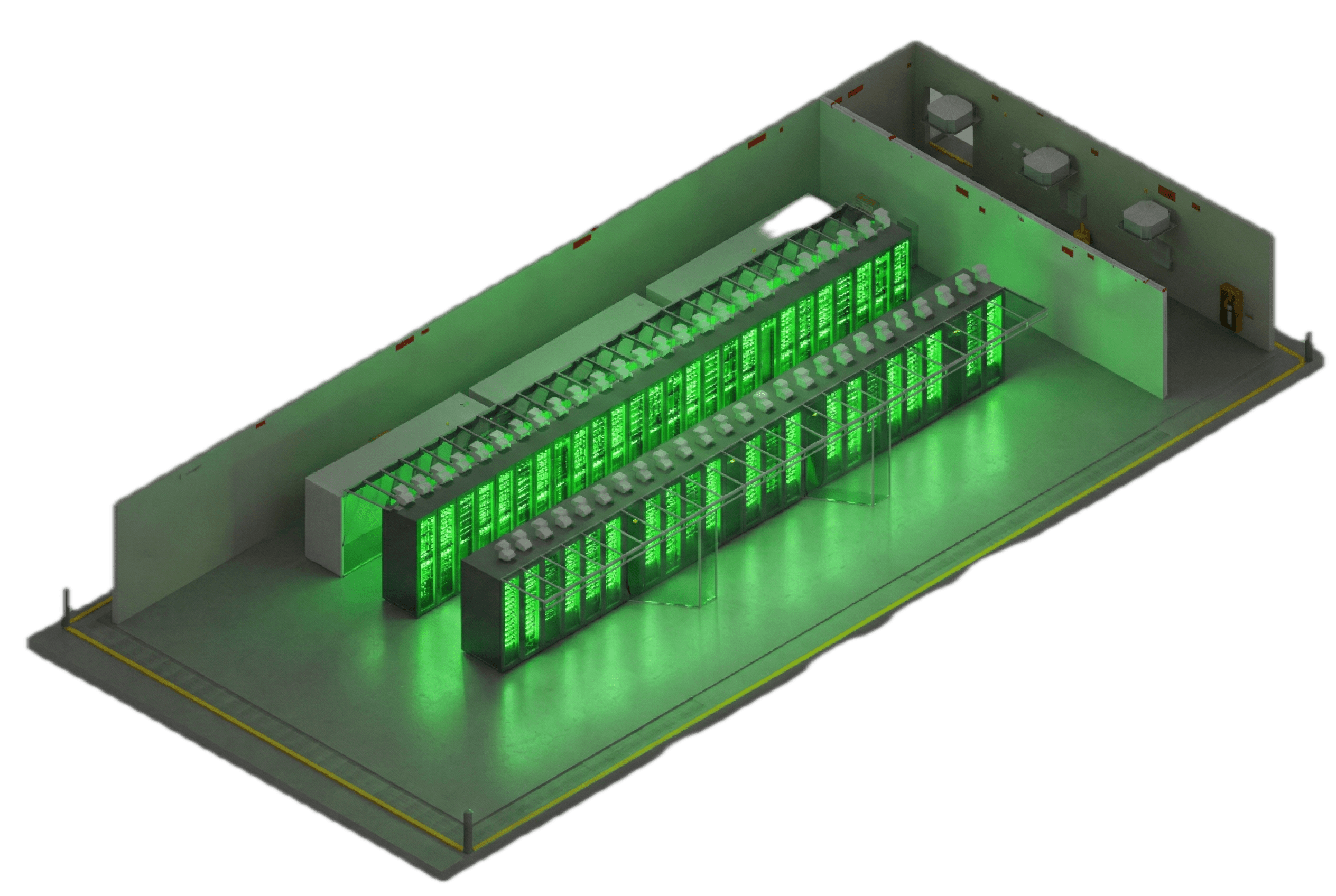

The modular infrastructure unit designed for inference deployment.

Use Cases

Production-grade AI infrastructure across real-world use cases.

Bleeding Edge AI Factories support multiple operational models, sovereign requirements, and distributed execution environments.

Zero-Trust Inference

Protecting both sides of AI: model IP and enterprise data.

Secure AI environments designed to protect both model IP and sensitive inference data. A continuous chain of trust, from the physical data center layer to the logical runtime environment, ensures strict isolation, verified execution, and controlled access to models during inference.

UPDATES

ICREA Level V Certified

QRO1 achieves ICREA Level V certification, the highest level of the ICREA international standard for data center infrastructure.

Funding Round Closed

Bleeding Edge closes oversubscribed funding round to accelerate expansion across LATAM.

QRO1 Campus Live

Our flagship Querétaro data center is now fully operational, with Tier V reliability.